The AI Race Just Became a Resource War. Here’s Who Owns the Mine.

GPU prices surged 48% in two months. Anthropic locked its most powerful model in a box. And the company that controls the most compute isn’t OpenAI. It’s the one nobody’s been watching.

THE NUMBER: $4.08 — the hourly rental price for a single Nvidia Blackwell GPU, up 48% from $2.75 in just two months. CoreWeave raised prices 20% and extended contract minimums to three years. For the first time since the early 2000s, the most important resource in AI isn’t talent or data. It’s electricity and silicon. The companies that own it just took the driver’s seat.

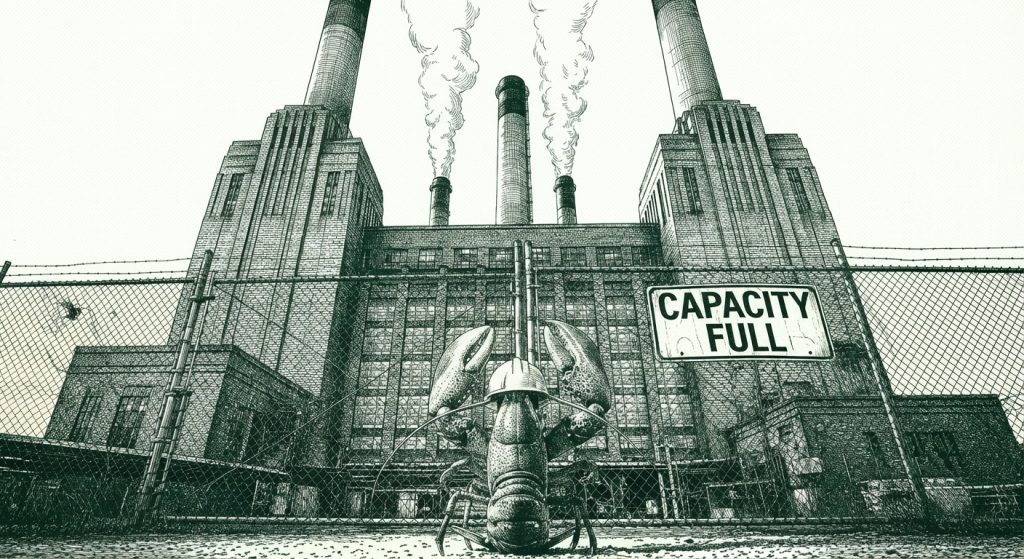

⚡ The AI industry spent three years telling you the future was about models. The smartest models. The biggest benchmarks. The most parameters. Turns out the future is about who owns the power plant.

Tomasz Tunguz dropped a framework this week that should be required reading for anyone making AI bets. Blackwell GPU rentals jumped from $2.75 to $4.08 per hour in sixty days. CoreWeave — the cloud provider Anthropic just signed a multibillion-dollar deal with — raised prices 20% and stretched minimum contracts to three years. Anthropic restricted its most capable model, Claude Mythos, to roughly 40 partner organizations. Not because they wanted to. Because they had to.
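For readers who want to check the arithmetic behind the headline number, here is a minimal sketch. The two hourly rates come from the text above; the assumption that a GPU is rented around the clock for a full year is ours, purely to show how the hourly jump compounds into an annual bill.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0

# Hourly Blackwell rental rates quoted in the text.
old_rate = 2.75
new_rate = 4.08

# Assumption (not from the source): one GPU rented 24/7 for a 365-day year.
HOURS_PER_YEAR = 24 * 365

increase = percent_change(old_rate, new_rate)
annual_old = old_rate * HOURS_PER_YEAR
annual_new = new_rate * HOURS_PER_YEAR

print(f"Hourly increase: {increase:.1f}%")
print(f"Annual cost per GPU at old rate: ${annual_old:,.2f}")
print(f"Annual cost per GPU at new rate: ${annual_new:,.2f}")
print(f"Extra per GPU per year: ${annual_new - annual_old:,.2f}")
```

Under that always-on assumption, the difference works out to more than $11,000 per GPU per year, before any contract minimums are considered.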

And here’s the thread nobody’s pulling: Claude Opus 4.6’s reasoning depth has been declining for weeks. Not speculation: forensic evidence. AMD Senior Director Stella Laurenzo published an analysis of 6,852 Claude Code sessions and 234,760 tool calls that reads like an autopsy of what happens when compute gets rationed at the model level. Thinking depth dropped 67% by late February. The model’s Read:Edit ratio (how much it researches before making changes) collapsed from 6.6 to 2.0. One in three edits went to files the model hadn’t even read. She built a bash script to catch the model quitting early. It fired zero times before March 8. Then it fired 173 times in 17 days.

The kicker: her effort stayed flat. Same number of prompts, same human input. But API requests exploded 80x. Estimated daily cost went from $12 to $1,504. Same work in, more than 120 times the cost, demonstrably worse output. She went from merging 191,000 lines of code per weekend to abandoning her multi-agent architecture entirely.
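To make the two metrics above concrete, here is a rough sketch of how a Read:Edit ratio and a "blind edit" share could be computed from a log of tool calls. This is not Laurenzo's script or methodology: the `ToolCall` record, the `"read"`/`"edit"` labels, and the sample session are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

@dataclass(frozen=True)
class ToolCall:
    tool: str  # hypothetical labels: "read" or "edit"
    path: str  # file the call touched

def read_edit_ratio(calls: Sequence[ToolCall]) -> Optional[float]:
    """Reads per edit; None when the session contains no edits."""
    reads = sum(1 for c in calls if c.tool == "read")
    edits = sum(1 for c in calls if c.tool == "edit")
    return reads / edits if edits else None

def blind_edit_share(calls: Sequence[ToolCall]) -> float:
    """Fraction of edits made to files not read earlier in the session."""
    seen = set()
    edits = blind = 0
    for c in calls:
        if c.tool == "read":
            seen.add(c.path)
        elif c.tool == "edit":
            edits += 1
            if c.path not in seen:
                blind += 1
    return blind / edits if edits else 0.0

# Hypothetical session: four reads, three edits, one edit to an unread file.
session = [
    ToolCall("read", "a.py"),
    ToolCall("read", "b.py"),
    ToolCall("edit", "a.py"),
    ToolCall("read", "c.py"),
    ToolCall("edit", "d.py"),  # d.py was never read
    ToolCall("read", "a.py"),
    ToolCall("edit", "c.py"),
]

print(read_edit_ratio(session))
print(blind_edit_share(session))
```

Tracked over time, a falling ratio and a rising blind-edit share would be the kind of signal the analysis describes: less research before each change.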

Everyone assumed Anthropic was clearing the runway for Mythos. But Mythos went back in the box. So what’s actually happening?

The simpler explanation is Occam’s Razor in action: compute is now the most expensive line item in the business, and Anthropic is rationing it. The “nerfing” isn’t a conspiracy. It’s economics. And Laurenzo’s data shows you exactly what that economics looks like from the user’s chair — shallower thinking, worse results, higher bills, and a productivity miracle running in reverse.

This reframes the entire competitive landscape. Not who has the best model — who can afford to run it at full depth.

Google’s Quiet Advantage: Own the Stack, Own the Future

⚡ Demis Hassabis is suddenly everywhere. Podcasts, interviews, stage appearances. This isn’t vanity. It’s a victory lap he’s running before anyone realizes the race changed.

Google owns TPUs. Google owns data centers. Google owns the power contracts. In a world where GPU rentals cost $4.08/hour and climbing, the company that manufactures its own silicon and generates its own compute doesn’t just have a cost advantage — it has a structural moat that no amount of venture capital can replicate.

While OpenAI burns through cash renting Nvidia hardware and Anthropic signs multibillion-dollar CoreWeave commitments, Google’s cost-per-inference keeps dropping because they control the entire stack from chip design to cooling systems. Hassabis told interviewers last week that DeepMind would have focused on curing diseases instead of consumer chatbots. That’s not regret — that’s a man who knows his company can afford to play the long game because the meter isn’t running.

Meanwhile, Google is quietly testing a desktop “Agent” tab in Gemini Enterprise — task management, connected apps, human review toggles. It looks a lot like Cowork. It looks a lot like what OpenAI is trying to build. The difference: Google can subsidize it with compute that costs them a fraction of what it costs everyone else.

The signal: If you’re evaluating AI partners for your enterprise, stop comparing benchmarks. Start comparing balance sheets. The company that can afford to keep the lights on when GPU prices double again isn’t the one with the best model — it’s the one that owns the power plant.

OpenAI’s Trapped: The Memo That Says the Quiet Part Out Loud

💲 A leaked internal memo from OpenAI’s Chief Revenue Officer is the most revealing document in the AI industry this year. And the story isn’t the gossip — it’s the structural trap it exposes.

Microsoft “limited our ability” to reach enterprise clients. Amazon demand is “staggering.” And the CRO’s assessment of the competition: Claude has become “a religion” — but Anthropic inflated its revenue by $8 billion.

Set aside the trash talk. The architecture of OpenAI’s problem is what matters. Their largest investor controls Azure, which controls their compute, which controls their enterprise distribution. Microsoft takes a margin on every API call. Microsoft decides which enterprise customers see OpenAI’s products. And Microsoft is simultaneously testing its own autonomous AI agents for 365 Copilot — OpenClaw-like bots that run “around the clock” inside the Microsoft ecosystem.

OpenAI’s response? Pivot hard to Amazon. The memo describes “staggering” demand through AWS Bedrock. But Amazon already has its own models. Amazon has Anthropic on its platform. OpenAI isn’t escaping a walled garden — it’s checking into a different one.

The CFO says they’re not ready to IPO. The CEO’s home was targeted twice in 48 hours. The public face of “AI is great for everyone” is now the most controversial figure in technology. And capital — the oxygen every AI company breathes — flows to winners. When the market perceives you as the bridesmaid, the next funding round gets harder. Then the next one gets impossible.

We’ve seen this movie before. Sun Microsystems owned the enterprise in 1999. They had the hardware, the software, the clients. Then the economics shifted, Linux ate their margins, and by 2010 Oracle bought them for parts. The question isn’t whether OpenAI has good technology. It’s whether good technology matters when you don’t control your own distribution, your own compute, or your own narrative.

The real story: Follow the incentives, not the press releases. OpenAI’s CRO wrote this memo because the internal math doesn’t work. When your biggest investor is also your biggest competitor and your biggest bottleneck, no amount of model quality fixes the structural problem.

The Agent Platform War: Everyone Wants to Kill OpenClaw

🦞 Jason Calacanis said it plainly: killing OpenClaw is “the number one goal” for every major AI lab. He’s not wrong — and the offensive is already underway.

Microsoft is testing autonomous agents for 365 Copilot that mirror OpenClaw’s always-on architecture. Google leaked an “Agent” tab in Gemini Enterprise. Anthropic is building an in-chat app builder to compete with Lovable. OpenAI hired OpenClaw’s founder. Hermes Agent is outperforming OpenClaw on benchmarks and winning user migrations. Every major platform player just declared war on the open-source agent that took the industry by storm.

This is the browser wars all over again. In 1995, Netscape gave away the browser and Microsoft bundled Internet Explorer into Windows to kill it. The playbook hasn’t changed. When a free, open product threatens platform revenue, incumbents don’t compete on quality — they compete on distribution. Microsoft can embed agents directly in Office. Google can embed them in Workspace. Apple can embed them on-device. OpenClaw’s advantage was being first and being open. But “first and open” didn’t save Netscape.

Here’s what makes this different: OpenClaw has 13,700+ skills and a developer community that’s building faster than any single company can. The SkillClaw paper this week demonstrated collective skill evolution — skills that improve across users autonomously. That’s a network effect the platforms can’t easily replicate.

But there’s a darker angle. That same community has a problem: 36% of OpenClaw skills contain prompt injections. The security story is the Trojan horse that platforms will use to justify walled gardens. “Our agents are safer because we control the ecosystem.” That’s the pitch. And for enterprise buyers — the founders and operators reading this newsletter — that pitch is going to land.

What business leaders need to know: The agent platform you pick in 2026 determines your toolchain for years. Open source gives you flexibility and cost savings but carries security risk. Platform agents give you integration and safety but create vendor lock-in. There’s no neutral choice. Pick the lock-in you can live with.

The 50-Point Trust Gap: The Crisis Nobody’s Addressing

📉 Stanford dropped its 2026 AI Index this week. One number tells the whole story: 73% of AI experts expect AI to have a positive impact on jobs. Only 23% of the public agrees.

That’s a 50-point gap between the people building AI and the people living with it. 52% of people say AI makes them nervous. 79% want mandatory disclosure when AI is involved. And while those numbers pile up in academic reports, Sam Altman’s house is taking gunfire.

The industry’s response to public fear has been, essentially, more conferences. More fundraising announcements. More benchmark celebrations. Meta is building a photorealistic AI clone of its CEO to talk to 79,000 employees, while those same employees read the OpenClaw internal push as a signal their jobs are next. The companies have become cults of true believers. And when a cult talks to the outside world, it doesn’t say “we hear your concerns.” It says “you don’t understand what we’re building.”

The public understands fine. They see $300,000 educations being obviated. They see careers cut short — Oracle laid off 30,000 people with a single 6 AM email. AI was cited in 25% of all tech layoffs in March, the first time companies openly blamed the technology. Q1 2026: 99,000 tech jobs gone. The public isn’t confused about AI. They’re terrified of it. And the experts are telling them they’re wrong to be.

This is the gap that gets regulated. We’ve seen this before. Nuclear power had the same perception split — the physicists said it was safe, the public said Three Mile Island. The physicists were right about the science and wrong about the politics. The result wasn’t better nuclear power. It was forty years of regulatory paralysis that killed the industry. When experts and the public disagree by 50 points, the public wins. Not because they’re right. Because they vote.

Why this matters: Your AI strategy doesn’t exist in a vacuum. The trust deficit will produce regulation — Virginia just became the first state to pass AI independence legislation, and a 291-page federal AI Act draft just dropped. Build your AI roadmap assuming the regulatory environment gets harder, not easier. The companies that engage with public fear now will shape the rules. The ones that ignore it will have rules imposed on them.

What This Means For You

The AI race just forked. It’s no longer about who has the best model. It’s about who owns the resources to run it — and who still has the public’s permission to try.

Evaluate your AI partners like you’d evaluate a supplier in a commodity crunch. Google can deliver. Anthropic is winning on product and has enterprise momentum. Microsoft can deliver but is conflicted. Apple will be able to once on-device inference matures. Even Perplexity is insulated — they rent models, not compute, and can switch providers when economics shift. OpenAI is structurally trapped. Plan accordingly.

Open source is no longer the ideological choice — it’s the economic one. When GPU rentals hit $4.08/hour and climbing, running GLM-5.1 on your own hardware isn’t a hobby project. It’s a hedge against the compute landlords. If you haven’t evaluated local inference for your use case, you’re leaving optionality on the table.

Build your agent strategy now, but pick the lock-in you can live with. The platform wars are here. Microsoft, Google, Apple, and Anthropic are all embedding agents into their ecosystems. Open source gives you freedom but carries security risk. Walled gardens give you safety but create dependency. There is no neutral position.

The trust gap is your problem too. Your customers see the same 50-point gap. Your employees see the layoff numbers. If your AI deployment doesn’t come with a clear story about what it means for the people affected, you’re not just making a technology bet — you’re making a political one.

The winners in 2027 won’t just have the most compute. They’ll be the ones who figured out that the scarcest resource wasn’t silicon. It was trust.

Three Questions We Think You Should Be Asking Yourself

If your AI provider’s costs double in the next 12 months, does your business case still work? GPU scarcity isn’t a temporary blip — it’s a structural shift. The companies that built their AI strategies around cheap, abundant inference are about to find out what happens when the assumptions change. Run the numbers at 2x your current cost. If the ROI disappears, you don’t have an AI strategy. You have a subsidy.

Do you know who actually owns the compute your AI runs on? Your vendor might be Anthropic, but your compute might be CoreWeave, which means your uptime depends on a company you’ve never evaluated. Supply chain risk isn’t just for physical goods anymore. Map your AI supply chain the way you’d map a manufacturing one. If you can’t trace it from model to chip to power plant, you have a single point of failure you haven’t priced.

What’s your story for the 50-point gap? When your board asks about AI, they’re going to start asking about the backlash too. Virginia is legislating. Congress is drafting. The public is angry. If your answer is “we’re just using AI internally,” you’re underestimating how fast the political environment moves when 52% of the population is nervous. Have a position. Because silence will be read as indifference.
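The first question above ("run the numbers at 2x your current cost") can be sketched in a few lines. Every figure below is a hypothetical placeholder, not data from any source, and the ROI definition (net benefit divided by total cost) is one simple convention among several; swap in your own numbers and definition.

```python
def roi(annual_benefit: float, annual_ai_cost: float, other_annual_cost: float = 0.0) -> float:
    """Return on investment: (benefit - total cost) / total cost."""
    total_cost = annual_ai_cost + other_annual_cost
    return (annual_benefit - total_cost) / total_cost

def stress_test(annual_benefit, annual_ai_cost, other_annual_cost=0.0,
                multipliers=(1.0, 1.5, 2.0)):
    """ROI at each multiple of today's AI spend; other costs held fixed."""
    return {
        m: roi(annual_benefit, annual_ai_cost * m, other_annual_cost)
        for m in multipliers
    }

def breakeven_multiplier(annual_benefit, annual_ai_cost, other_annual_cost=0.0):
    """How many times AI costs can rise before ROI reaches zero."""
    return (annual_benefit - other_annual_cost) / annual_ai_cost

# Hypothetical placeholder figures, for illustration only.
benefit = 500_000.0     # annual value the AI deployment is credited with
ai_cost = 200_000.0     # current annual inference and API spend
other_cost = 50_000.0   # staff, integration, and other fixed costs

results = stress_test(benefit, ai_cost, other_cost)
breakeven = breakeven_multiplier(benefit, ai_cost, other_cost)

for multiple, value in results.items():
    print(f"AI cost x{multiple}: ROI = {value:.0%}")
print(f"ROI reaches zero at x{breakeven:.2f} of today's AI cost")
```

In this made-up case the business case survives a doubling, but only barely. If your own breakeven multiplier sits below 2, that is the subsidy the question is warning about.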

“Show me the incentives and I’ll show you the behavior.”

— Charlie Munger

In the AI industry, the incentive just shifted from “build the best model” to “own the most compute.” Watch the behavior change accordingly.

— Harry and Anthony

Sources:

- Tomasz Tunguz — The Beginning of Scarcity in AI

- Stanford HAI — 2026 AI Index Report

- Stella Laurenzo — Claude Code quality regression analysis (6,852 sessions, 234,760 tool calls)

- OpenAI CRO Leaked Memo via Techmeme

- UK AI Security Institute — Claude Mythos Evaluation

- Jason Calacanis — Big Tech wants to kill OpenClaw

- Virginia AI Independence Legislation — SB 384

- Microsoft 365 Copilot Autonomous Agents (The Verge)

- Google Desktop Agent Tab in Gemini Enterprise
