Sully: Brace for impact.

Three frontier labs killed the prompt box this week. Theory Ventures' Tomasz Tunguz killed the inbox the same morning. Jason Lemkin published a job posting for a senior demand-gen executive who would be reporting, in the org chart, to an artificial intelligence named 10K. The unoptimized layer in the loop is the only one that can land the plane.

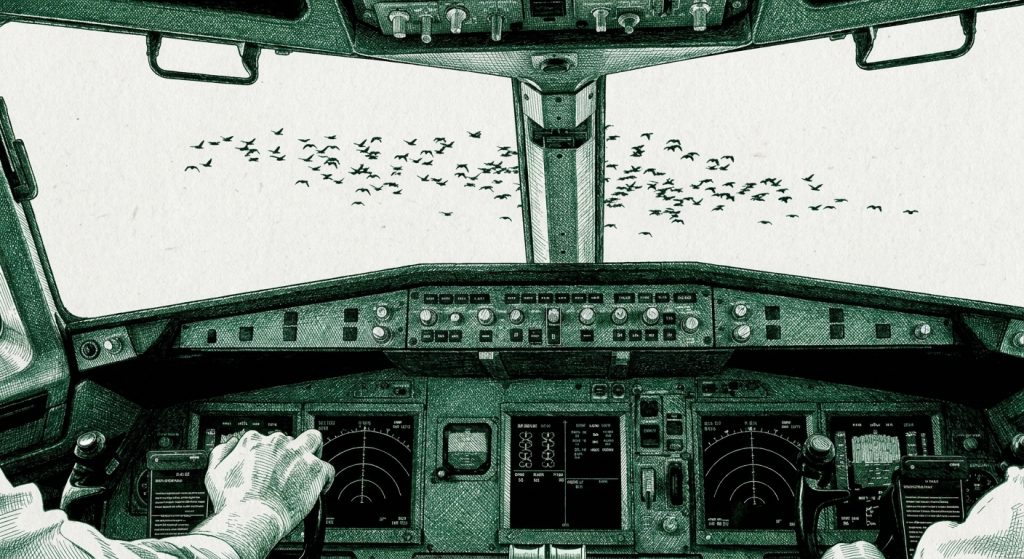

THE NUMBER: 208 seconds — the total elapsed time, from the moment a flock of Canada geese disabled both engines on US Airways Flight 1549 over the Bronx on the afternoon of January 15, 2009, to the moment Captain Chesley Sullenberger set the Airbus A320 down on the Hudson River with the gear up, the flaps half-deployed, and all 155 souls intact on the wings. The NTSB, in the year that followed, ran twenty separate simulations of the same scenario. The first batch — twenty pilots in a simulator, starting at the moment of bird strike — landed the aircraft safely back at LaGuardia or Teterboro every time. Then the board added back the thirty-five seconds Sully spent assessing the engines, calling the bird strike to ATC, running the dual-engine restart procedure, and deciding the river was the only survivable option. Once the thirty-five seconds of human thinking were re-introduced into the simulation, every attempt to make either airport crashed. “Anybody can land a plane in a simulator. The reality is, no one can do that,” Sully told the NTSB hearing room in 2009, the line that becomes the moral pivot of the 2016 Tom Hanks film and the moral pivot of the entire 2026 AI cycle. The autopilot can fly. The autopilot, with two dead engines, cannot decide.

Yesterday’s I Am Iron Man issue named the 200-millisecond threshold Mira Murati’s Thinking Machines Lab built around — the latency budget below which conversation stops feeling like turn-taking and starts feeling like presence. The piece walked the four layers of the suit: console, agent, intelligence, output. Everything that landed today landed at the console layer. This morning Google shipped Gemini Intelligence for Android, Googlebook as a new laptop category, and Magic Pointer — a Gemini-powered cursor that does not tell the computer where you clicked but what you meant. Perceptron released Mk1, a model built to consume video as a stream of events rather than a pile of screenshots. Thinking Machines’ Interaction Models — yesterday’s launch — passed 2.4 million views in under twenty-four hours. And Tomasz Tunguz, who on Sunday published the Localmaxxing essay that became one of the load-bearing data points of yesterday’s piece, published a new essay this morning called The Disappearance of Email. Its opening line: “Nobody will open Gmail five times a day in five years. Not because email is dying. Because it’s working too well.” And overnight Andrej Karpathy sketched a five-stage progression for LLM output: raw text → markdown → HTML → interactive neural video → simulations.

Five different actors. Three layers of the stack. One thesis. The text box is the bottleneck. The interface is being inverted. This is no longer a Murati product launch. By Wednesday morning of the second week of May 2026, this is the consensus pick of Silicon Valley, sketched in public, on five different blogs, in three different time zones, inside thirty-six hours.

And the moment the consensus arrives, the question stops being what’s getting optimized and starts being who isn’t.

The human in the loop, it turns out, is being optimized in three directions at once, and the same operator feels all three. They are not the same story. They carry different verdicts. They imply different careers. The brutal version and the optimistic version are both true. It depends on which seat you are sitting in.

That is what tonight’s piece is about. Three layers of the human in the loop, three frontier labs pulling on each one, and one captain in 2009 who happens to be the cleanest illustration of why the unoptimized layer still gets paid.

“My aircraft,” Sully said, about fifteen seconds after the geese. The captain’s standard phrase for taking manual control from the first officer. Three roles begin at that moment. The piece is structured around them.

✈️ Layer One — Speed: The Human Is The Slowest Thing In The Cockpit

Start with the unflattering reading, because it is true, and because the cycle is no longer pretending it isn’t. The human is the slowest node in any modern technical stack. The fastest software loop in production in 2026 measures itself in nanoseconds. The intermediate layers (inference, network, database) measure in milliseconds. The fastest human reaction time, in laboratory conditions, is around 200 milliseconds for a visual stimulus and 150 for an auditory one. Sully’s first conscious response to the bird strike came 1.2 seconds after the impact on the cockpit voice recorder, which is roughly as fast as an attentive human nervous system gets outside a lab. The autopilot was running at eighty cycles per second; the fly-by-wire envelope protection at a thousand. The human is the slow part. Always.

The cycle this week is what happens when the rest of the stack stops waiting for the human.

Murati’s Interaction Models take audio, video, and text in 200-millisecond chunks, small enough that the human nervous system experiences the conversation as continuous. The model isn’t taking turns. It is processing in parallel. When you talk to it, it is already responding to the front of your sentence while you finish the back of it. Turn-taking, the protocol that has governed every human conversation in recorded history, is being deprecated. What replaces it is what happens when you and the model are both inside the same 200ms window, which is, for the first time in human-computer interaction, the same window two humans inhabit when they talk.

Google’s Magic Pointer takes the same shortcut at the input layer. Until this morning, the cursor told the computer where you clicked. This morning, the cursor tells the computer what you meant. Point at a date in an email, the calendar opens. Point at a table, the chart renders. Point at a paused frame in a travel video, the restaurant pin drops on the map. The keystroke disappears. The semantic gap closes inside the gesture. The interface starts carrying part of the prompt for you. The Neuron called it before lunch this morning: the prompt box is dying.

Tunguz takes the same shortcut at the workflow layer. The inbox today is “a workflow starting line, not a destination.” Every email is just step one. Read it, decode the request, look up the calendar, draft a response, send. The human is the API. Tunguz’s framing — “the inbox isn’t being optimized. It’s being obsoleted” — is the same move Murati made at the conversation layer and Magic Pointer made at the cursor layer. Email persists as an API; the inbox disappears as an interface. Outcomes arrive; you review, you don’t process.

Three labs. One framework. The slowest thing in the loop is the human, and the rest of the loop just stopped routing through them on the way to the work.

Sully was the slowest thing in the cockpit on January 15, 2009. The autopilot in the A320 could have handled normal cruise faster than him and with less effort. The first officer, Jeff Skiles, had the airframe before the geese hit. Sully took the airframe back not because he was faster but because he was the captain — the slowest, most expensive, most legally accountable node in the entire system. And every modern aviation safety doctrine has been built around getting the slow node out of the parts of the loop where speed matters. Cockpit automation, ground-proximity warning, traffic-collision avoidance, autoland — all of it is what Murati and Google and Tunguz are now doing in software: routing the work around the slowest layer until the slowest layer is left with only the work it is uniquely good at.

This is the unflattering reading of the human in the loop, and it is the reading that costs jobs. The processing-speed layer of the knowledge worker — the marketing-ops coordinator, the support triage rep, the deck-building analyst, the junior engineer writing boilerplate — every one of those jobs is the human doing what the cockpit autopilot does between the gate and the destination. Steady-state work in the cruise phase. When the rest of the stack gets fast enough to do that work without a 1.2-second human delay attached to every step, the work routes around the human. Challenger attributed 26 percent of April’s announced job cuts to AI. DeepL cut 25 percent of its workforce; Cloudflare cut 1,100 the same week. Acemoglu’s QJE paper named the pattern historically: automation cycles target the wage-premium worker first, and the productivity gain gets eaten in the targeting.

Microsoft’s 2026 Work Trend Index put a measurement on this last Monday. Nineteen percent of the workforce sits in the “Frontier” tier — the operators who have reorganized their loop around AI and pulled away from the average. Ten percent is “Blocked” — fluent operators stuck inside companies that can’t use them. The remaining seventy-one percent is the cruise-phase worker, and the cruise-phase worker is what the autopilot is for.

So: yes. Layer one is true. The human is the slowest, least optimized node in the stack, and the stack is, in 2026, very rapidly building around them. Dario Amodei said the exact thing on the record this week, citing Amdahl’s Law: if you can suddenly write three or four times as many pull requests, you don’t get three or four times the output. You get a pile of code no one can review. That is the Jeff Dean framing this newsletter ran on April 17 — now coming from the CEO of Anthropic. In a stack with Murati’s 200ms console at the front and a swarm of Devin instances doing the writing in the middle, the human review queue is the new bottleneck. Anthropic’s own 2026 Agentic Coding Trends Report has the numbers: 98 percent more pull requests merged under heavy AI adoption, 91 percent longer review time, deployment velocity flat, 96 percent of developers don’t fully trust the output. The work piles up in the slow node.
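The Amdahl’s Law point can be made concrete with a few lines of arithmetic. What follows is a minimal sketch in Python, assuming a hypothetical split in which writing code is 40 percent of the delivery cycle and review, testing, and deployment are the other 60 percent. That split, and the names `amdahl_speedup` and `WRITING_SHARE`, are illustrations only; they are not figures from Amodei or from the Anthropic report.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when only part of a workflow gets faster (Amdahl's Law).

    accelerated_fraction: share of total cycle time that is being sped up (0..1).
    factor: how many times faster that share becomes.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    untouched_fraction = 1.0 - accelerated_fraction  # e.g. the human review queue
    return 1.0 / (untouched_fraction + accelerated_fraction / factor)


# ASSUMPTION: writing code is 40% of the cycle; review and deploy are the rest.
WRITING_SHARE = 0.40

for factor in (3, 4):
    overall = amdahl_speedup(WRITING_SHARE, factor)
    print(f"writing {factor}x faster -> whole cycle {overall:.2f}x faster")

# The ceiling: even infinitely fast writing cannot beat 1 / (untouched share).
ceiling = 1.0 / (1.0 - WRITING_SHARE)
print(f"ceiling with instant writing -> {ceiling:.2f}x")
```

Under that assumed split, writing three or four times faster speeds the whole cycle by only about 1.36x to 1.43x, and the ceiling is roughly 1.67x no matter how fast the agents write. The untouched share, the review queue, sets the limit.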

If layer one is the whole story, the conclusion is bleak: the human is the cost center, the cost center gets optimized out, and the new operator runs the company with seven people and ten thousand agents. The conclusion is wrong. It is wrong because it is missing layers two and three. The cycle this week is not making the human go away. It is re-pricing the human, and the price is moving up at layers two and three almost as fast as it is moving down at layer one.

Sully’s airframe lost both engines at 2:27:11 PM Eastern. He had the airframe back fifteen seconds later. He landed at 2:30:39. The interesting part of his three minutes and twenty-eight seconds had almost nothing to do with how fast he could push the rudder pedals.
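The headline number falls straight out of those two timestamps. A minimal sketch in Python, assuming both are same-day Eastern wall-clock readings exactly as quoted in this piece (the variable names are illustrative):

```python
from datetime import datetime

TIME_FORMAT = "%H:%M:%S"

# Timestamps as quoted above, written in 24-hour form.
engines_lost = datetime.strptime("14:27:11", TIME_FORMAT)
touchdown = datetime.strptime("14:30:39", TIME_FORMAT)

# Subtracting two datetimes yields a timedelta; convert it to whole seconds.
elapsed_seconds = int((touchdown - engines_lost).total_seconds())
minutes, seconds = divmod(elapsed_seconds, 60)

print(f"{elapsed_seconds} seconds = {minutes} min {seconds} s")
```

The difference is 208 seconds, which is the three minutes and twenty-eight seconds the piece keeps returning to.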

🧠 Layer Two — Polymath: The Human Connects What The Specialist Can’t

The piece Mario Gabriele published Tuesday night, profiling investor Cyan Banister, opens with the bee story.

“Bees are dying, humanity is doomed, and we will need robot bees to survive,” is the version of the early-2010s headline Banister calls back. Then the actual solution: a mycologist, walking through a forest, watching bee behavior, who noticed that bees foraging at a certain kind of decaying log were exhibiting fewer symptoms of colony collapse than peers in healthier-looking woods. He looked at the wood. He looked at the fungi. He looked at the bee gut microbiome. He developed a treatment from a chaga-related extract that has, in field trials, reduced the symptoms by a measurable amount.

The mycologist was not an apiarist. The apiarists, with their journals and conferences and decades of bee-specific training, had not solved it. “The polymath wins,” Gabriele writes, “not because they know everything, but because they have the tools to connect what specialists cannot.”

There is a version of this argument that lives in the kitchen rather than the boardroom. I was sitting with my wife the other afternoon — a single purple peony on the table between us — and we were talking about what AI cannot yet do. I could describe the peony for you in nauseating detail. The gradient of the violet at the petal’s edge. The way the bloom unhinges from the bud at exactly the wrong moment. The brittleness in the stem two days after it was cut. The particular saturation of color the iPhone camera cannot capture. The model can do all of that. The model can do it faster than I can. What the model cannot do is sit with the peony. It cannot have the peony on its table for an hour. It cannot watch the peony die. It cannot have the peony become the thing the spouse remembers from this particular Sunday in May. The same is true at a different scale. You can read every word ever written about the granite spire on the Argentine-Chilean border that gave Patagonia its logo. You can run every image-recognition model on every photograph ever taken of Cerro Fitz Roy. You cannot replicate what it is to stand at El Chaltén at sunrise and watch the Andean condors hold their wings against the wind that comes off the icecap. The model has read the books. The model has not stood there. Description is the model’s edge. Experience is not. The polymath premium is what an embodied lifetime of standing-in-places produces, and the cycle this week is closing the descriptive gap to zero without touching the experiential one. That is the asymmetry. The labs are racing toward a kind of hyper-realism where the output is so dense and so multimodal that it almost feels as if you have experienced what you have not. It is an extraordinary trick. It is not the thing.

This is the second layer. It is the layer the cycle is now amplifying rather than optimizing out.

The reason Magic Pointer is interesting — the reason Mira Murati’s Interaction Models matter beyond the 200ms latency number — is not that they make the input faster. It is that they make cross-domain context cheap. You point at the table in your CRM, and the model knows to query your supply chain, your inventory, your marketing CRM, your accounting ledger, your sponsor manifest, and the LinkedIn profile of the person you are about to email. Until two months ago, that connect-the-dots move was the job of a senior consultant — a McKinsey engagement, a BCG deck, a six-week study, a $400K invoice. By the time the deck arrives, the quarter is over. The polymath premium used to be the cost of the polymath. What the cycle is doing this week is making the polymath premium close to free — but only for the operator who already has the polymath instinct to know which fields to connect.

The mycologist solved colony collapse because he was holding a fungal taxonomy in his head when he walked into the apiarist’s problem. The mycologist did not have to be smarter than the apiarist. He had to be in possession of a domain the apiarist had never thought relevant. This is what Cyan Banister means when she says the knowledge gap between disciplines closes from decades to hours. The model can run the fungal taxonomy lookup. The model can run the bee-microbiome literature search. The model can run the chemical extraction modeling. The model cannot, yet, be the person walking through the forest on the day that the bees on the chaga-bearing logs look different from the bees in the healthy woods. Embodied multi-domain attention is still the human’s edge. The model is closing every other gap; that one is closing more slowly because it is a function of being a body in the world over a long enough timeline to accumulate cross-domain pattern recognition.

Sully had been a pilot since he was sixteen. He flew F-4 Phantoms in the Air Force. He had been a glider instructor for two decades. He had served as an NTSB accident investigator. He had a master’s degree in industrial psychology focused on cockpit ergonomics. When the geese hit, he was not running the dual-engine emergency checklist from training; there was no time. He was reaching for the part of his nervous system that had landed gliders without power and applying it to a twin-engine Airbus that, for the next 188 seconds, was effectively a glider. The Airbus’s autopilot had no glider doctrine. The Airbus’s autopilot had no NTSB-investigator instinct that said the river is the only option that doesn’t put an airliner into Midtown Manhattan. The Airbus’s autopilot, in fact, did exactly what the simulations later showed: it kept trying to fly the airframe like a powered aircraft, which is what eventually crashed every simulator pilot who didn’t have the polymath instinct to declare the airframe a glider and act accordingly.

The mycologist found the chaga. Sully found the river. Both of them found their answer because their training was wider than the problem they were facing. The specialist has the deeper training inside the domain. The polymath has the wider training across domains. AI is making domains shallow. Which means, on net, AI compresses the value of the specialist’s depth — and increases the value of the polymath’s width.

This is why Jason Lemkin’s SaaStr piece this morning describes the new hire’s job the way it does. He is not looking for a deeper specialist. The agents already have the depth. He is looking for the person who can run the play. Which campaign ships first. Which audience gets which version. Which AI SDR sequence syncs with which email series. Which key account gets the white-glove path. Which partner co-marketing push gets pulled this week. “10K can hand you a brilliant set of moves. You’re the one who actually runs them.” That sentence is the polymath thesis as a marketing job description. The marketer Lemkin is hiring needs five years of demand-gen experience plus the cross-domain bandwidth to weave a Salesforce sync, a HubSpot attribution rule, a Bizzabo event registration, a YouTube content drop, and a sponsor relationship into a single weekly cadence. The agents handle the depth in each of those silos. The marketer is the mycologist walking through the forest.

Prosus, one of the world’s largest builders and operators of AI agents, published its “Coming Age of AI Colleagues” report at five-ten this morning. Sixty thousand AI agents deployed across forty thousand employees in eighteen months. The most important finding — buried in the press release because it is uncomfortable — is the power law. Two percent of the agents drive a disproportionate share of business impact. Less than one percent of the agents deliver “transformative” value — the equivalent of thousands of hours of human work per month. The other ninety-eight percent do useful but ordinary work. The portfolio companies, with no central mandate, independently converged on roughly the same twenty use cases. Across different industries. Different geographies. Different languages. The two percent of agents that mattered, and the twenty use cases that mattered, were the ones a polymath operator could see needed connecting — between the customer-success queue and the sales-call transcript, between the CRM and the marketing brief, between the calendar and the supply-chain ledger. The other ninety-eight percent of agents were the apiarists doing apiarist work. The two percent were the chaga.

This is where the new operator earns the polymath premium. It is the layer where the human stays in the loop not because the human is fast — the human is slow — but because the human is the only node in the system that has read three different books and lived in three different cities and held two different jobs and noticed something on a walk through the woods on a Tuesday afternoon. The polymath layer is the part of the human that AI is amplifying rather than replacing. Every new context-bridging interaction model — Magic Pointer, Interaction Models, Perceptron’s Mk1 — is a force multiplier on the operator who already has the connections to make. It does not invent the connection. It executes the connection at a speed the human can’t execute by themselves.

Sully had three minutes and twenty-eight seconds between the bird strike and the landing on the Hudson. He used thirty-five of those seconds, by the cockpit voice recorder, on three things he had to do in parallel: confirm the engines were out, decide LaGuardia was impossible, and decide that the only survivable target was the river. None of those three decisions was inside the A320 procedure manual. All three of them came from the polymath layer of his nervous system. The glider instructor of two decades inside his head was the one who decided the airframe was now a glider. The F-4 pilot was the one who knew exactly how fast the airframe lost altitude without thrust. The NTSB investigator was the one who, walking the route in his head while the river came up to meet him, knew that Teterboro’s runway approach would put him over the Bronx and Hoboken at an altitude he could not survive. He had three careers’ worth of bandwidth and he used all of it in three and a half minutes.

This is what the operator who reorganizes around AI gets, if they are paying attention. The model does the depth. The operator brings the width. The job becomes about which fields to connect, not which forms to fill out. That is layer two. That is the value layer that is going up, not down.

It is also, on its own, not enough.

⚖️ Layer Three — Taste: The Human Is The Sign-Off

Sully’s most famous line is not “my aircraft.” It is not “we’re going to be in the Hudson.” It is, in the cabin, eighty-one seconds before the splashdown, the announcement he made on the PA: “Brace for impact.”

Three words. A complete editorial decision. The captain told 154 other people, in language they would understand, what was about to happen and how to prepare. That is the third layer. Not speed. Not bandwidth. Judgment, expressed in language, with accountability attached. The captain is the only person in the cockpit who can make that announcement. The first officer can’t. The autopilot can’t. The flight management computer absolutely can’t. The act of authoring three words at that moment is the moment the human in the loop is doing the work that nothing else in the loop is licensed to do.

The third layer is the one Karpathy sketched overnight, although he sketched it from the AI side rather than the human side. His five-stage progression for LLM output — raw text → markdown → HTML → interactive neural video → simulations — is the labs’ optimization of the presentation layer. The reason the labs are building HTML and interactive video output is, as Karpathy puts it, that “about a third of our brains is a massively parallel processor dedicated to vision.” The audio in, vision out asymmetry is real. The model’s job is to present the work in the format the human review system can absorb fastest. The model is being optimized for human review, which means the model is being optimized for the human’s third layer — the sign-off.

Anthropic’s Claude Code Agent View, shipped to research preview Monday this week, is the same move at the coding agent layer. The dashboard is not for the agents. The agents do not need a dashboard. The agents are doing the work. The dashboard is for the human operator who has to decide which session needs input, which session is working, which session is done, and which session needs to be killed. The dashboard is the captain’s seat for a swarm of Claude Code instances. And it is on the screen for exactly one reason: because the act of deciding which output ships is still licensed to the human. Anthropic could ship Claude Code without an Agent View. They didn’t. They built the captain’s seat first.

Lemkin’s hiring memo names this layer with embarrassing precision: “10K can produce 50 campaign variants in an hour. You need to be the person who knows which 3 should ship and which 47 shouldn’t. We’re not looking for someone who treats AI output as gospel, and we’re not looking for someone who dismisses it. We need someone who reviews 10K’s plans the way a great chief of staff reviews a CEO’s calendar. Push back where it matters. Approve where it makes sense. Improve the output. Every day. Every campaign. Hands on.” Push back. Approve. Improve. Hands on. Every day. That is the captain’s seat as a job description.

The reason the third layer holds is not that the human reviews faster than the agent could review itself. The agent can review itself: Claude Code’s outcomes loop is precisely an agent-reviews-its-own-output system with rubric-driven self-improvement against developer-defined success criteria, which Anthropic shipped at Code with Claude last week. The reason the third layer holds is that the review carries legal, financial, reputational, and moral weight that has to attach to a person. The agent does not have a name to sign the document. The agent does not have a fiduciary duty to the shareholders. The agent does not have a customer who can sue it. The agent does not have a body to deliver the apology in person if the answer was wrong. The captain of US Airways 1549 was the only person in the cockpit whose name was on the certificate that said the airframe was airworthy and the only person whose call could be defended in front of the NTSB. When he said “brace for impact,” he was attaching his name to the decision. The first officer’s voice would not have had the same authority. The PA announcement is the act of a captain.

OpenAI understands this. The structural choice they made Monday — launching the OpenAI Deployment Company with $4 billion in initial capital, a $14 billion enterprise valuation, and a 19-firm partnership roster led by TPG and including Goldman, McKinsey, Bain, Brookfield, SoftBank, and Capgemini — is the institutional purchase of the captain’s seat. The acquisition of Tomoro brings 150 Forward Deployed Engineers into the org from day one. The pitch to the enterprise is not “we will give you better models.” The pitch is “we will put a named human inside your organization, with our brand on their business card and our service contract on their hourly rate, and that human will be the captain of your model deployment.” Goldman, McKinsey, Bain — those are firms whose entire business model is selling the captain’s seat by the hour. Their participation as founding partners is not a check. The captain layer is a market. OpenAI is now selling it directly.

The captain layer is also, importantly, the layer where the human’s value is increasing the fastest. Lemkin’s $80-a-month AI VP of marketing replaces a marketing analyst, an ops coordinator, a junior content marketer, a customer success coordinator, and a sponsor relations manager. The same SaaStr is hiring one human — a senior demand-gen director, six-figure salary, mostly remote, reports to 10K — to sit in the captain’s seat above all of them. Five jobs collapse into one. The one that remains is the captain’s seat. The one that remains is the seat that pays more, not less, than the five it replaced. The five jobs were the cruise phase. The one job that remains is the bird-strike phase.

Sully’s airframe lost both engines in cruise phase. The autopilot was flying. The first officer had the airframe. Sully had the radio. He was — in cruise phase — the most expensive, slowest, least productive node in the cockpit, paid roughly two and a half times the first officer’s rate to sit in the left seat and not touch the controls. The cycle had been optimizing pilots out of the loop for thirty years. Cockpit automation had taken roughly forty percent of the pilot’s previous workload by 2009 and the industry was actively debating single-pilot commercial flight as a viable cost-reduction. And then the geese hit. And the single most valuable node in the system, suddenly, was the captain — the slowest one, the one with the most cross-domain training, the one whose name was on the certificate. Three minutes and twenty-eight seconds later he landed the plane on the river and the entire single-pilot debate ended for a decade.

Layer three is the bird-strike phase. Layer three is the phase the captain is in the seat for. Layer three is the phase that retroactively justifies the cost of having a captain at all.

🛬 The Hudson — Why The Three Layers Don’t Separate

Before the three layers fuse into one, the honest version of the captain’s seat needs naming. Sully is the exception, not the rule. Read enough NTSB accident reports and the dominant pattern in commercial aviation is the inverse of the Hudson. Tenerife, 1977 — KLM captain rolled for takeoff without clearance and 583 people died on a fogged-in runway. Air France 447, 2009 — the first officer pulled the side-stick back into a stall and held it there for three and a half minutes, all the way down into the Atlantic. Korean Air 801, 1997 — captain ignored a junior officer’s altitude callout and put a 747 into a hill. Asiana 214, 2013 — crew got confused by an automation mode on short final into SFO and let the airframe descend below the glideslope until the seawall caught the gear. In commercial aviation, the human in the cockpit is, statistically, more often the proximate cause of the disaster than the rescue. Every increment of cockpit automation added since the 1990s has been a net safety improvement because the automation has been the steadier option in cruise. This is not a defense of the human pilot. This is the honest part of the captain-layer argument: the captain is in the seat because the cruise phase has gotten safer with the human routed out of it, and because the cruise phase has gotten safer, the rare bird-strike phase has become the entire economic justification for the captain’s wage. The autopilot is paid by the 99%. The captain is paid for the 1%. Sully landed at LaGuardia three thousand times in his career without anyone writing a book about it. He landed at the Hudson once, and the book got written. The book is the entire reason the captain’s seat is still occupied at the price point at which it is occupied.

Knowledge work is heading to exactly the same place. Most days the AI VP of marketing is the safer choice for which campaign ships. Some days — one day a quarter, one day a year — the human captain’s polymath instinct catches the campaign that should not have shipped, and that’s the day that paid for the year. The cruise phase is being automated. The captain is being repriced upward for the 1%. Anyone selling you the version where this is bad news for human workers in aggregate is selling you the cruise-phase reading. The captain’s reading is the inverse.

The piece could end here. Three layers, three verdicts, the optimistic operator picks the polymath-and-taste version and gets paid for it. Done.

It is not done. There is one more thing the cycle this week made plain, and it is the thing that matters most.

The three layers are not separable. They are a single nervous system performing a single job. Sully’s polymath layer, the three careers’ worth of bandwidth, is what made his taste layer trustworthy. The reason the cabin crew had the cabin braced in eighty-one seconds is not that Sully was a great public speaker. It is that, by the time he said “brace for impact,” he had personally verified, using his glider-instructor’s gut and his F-4 pilot’s altitude math and his NTSB investigator’s airport-geography map, that the river was the only outcome where the announcement was a survival instruction and not a death sentence. The taste was downstream of the polymath. The polymath was downstream of the experience. The experience required a body that had been a pilot for forty years.

The agents do not have that. Yet. Maybe ever.

What the agents do have, this week, is a console that closes the input gap (Murati), a cursor that closes the context gap (Magic Pointer), an output layer that closes the comprehension gap (Karpathy’s HTML/video), a coding swarm that closes the execution gap (Devin’s $445M ARR, Claude Code’s new dashboard, Cursor leading the first Coding Agent Index with Opus 4.7), and a wholesale services arm (the OpenAI Deployment Co, the 60,000 Prosus agents) that lets every operator with a checkbook rent the rig pre-assembled. Every layer of the stack except the captain’s seat got faster and cheaper this week.

The operator who reorganizes around that — the operator who takes the captain’s seat seriously — gets all three layers of their humanity priced correctly. The speed layer falls out of the critical path; let it. The autopilot can fly cruise. The polymath layer goes to work; that is what you read three books and held two jobs to be able to do. The taste layer signs the deliverable; that is what your name on the cert is for. Five jobs collapse into one. The one that remains is the one that pays.

The operator who doesn’t reorganize around it gets the inverse. The speed layer is still on their critical path; it’s slowing the team down. The polymath layer doesn’t activate because the agents don’t have any width-of-context to amplify. The taste layer never gets engaged because by the time the work shows up in their inbox, the captain’s-seat decisions have already been outsourced to a $14B Deployment Company partner from McKinsey. The middle layer of the org chart is what gets cut, and the operator who didn’t take the captain’s seat seriously becomes the middle.

This is the part of the story that connects backwards to Monday’s Lucy. The Ascend COO, Omar Ismail, did not 38-percent his ARR because Claude Code is better than a marketing team. He did it because he sat in the captain’s seat and ran the captain’s-seat moves — the ICP analysis, the brand reset, the segmentation calls, the campaign sequencing, the daily review cadence. The agents in the suit did the cruise-phase work; the captain did the bird-strike-phase work and the sign-off. The 38 percent is the captain premium. Microsoft’s Work Trend Index said the same thing in the boring language of statistical analysis: 67 percent of the variance in AI outcomes is organizational, 32 percent is individual. The org chart variable is whether the captain’s seat is occupied. The individual-fluency variable is what the captain in that seat does with the rig once it’s running.

This is the part of the story that connects forward to the day after tomorrow. The captain layer is unionizing. Every major lab is now optimizing toward it: Lemkin’s hiring memo (today), Prosus’s 60K agent playbook (today), OpenAI Deployment Co + Tomoro (Monday), Anthropic Code with Claude harness (last Wednesday), Microsoft Work Trend Index (last Monday). The mid-cycle pattern is clear: every lab is shipping the rig, and every major institutional partner is positioning to sell the captain, and the entire knowledge-worker stack is being re-priced around which seat you’re paid to sit in.

The Sully metaphor is doing one more thing, and it is the thing that makes the piece worth writing tonight. When the NTSB ran its twenty simulations of US Airways 1549 and every simulation landed safely at LaGuardia or Teterboro, the world’s reaction was that Sully had needlessly risked lives by ditching the plane in the river. The Today Show had the story. Op-eds were written. The captain who had landed 155 people on the wings was being publicly second-guessed by the same regulator that had certified him to fly the airframe. Sully sat at the NTSB hearing in 2009 and asked the questions that ended the conversation. Did your simulator pilots know, going in, that the engines would fail? They did. Did they get to start the simulation right at the moment of failure? They did. Did the simulator model the time required to recognize the situation, run the engine restart procedure, make the radio calls to ATC, and decide on a target airport? It did not. The board added the thirty-five seconds. Every simulation crashed.

The simulator could optimize layer one — the speed layer — perfectly. It could not model layer two or layer three at all. Without the captain’s thirty-five seconds, the autopilot lands every time. With the captain’s thirty-five seconds, the autopilot crashes every time. The captain’s value, measured in the simulator the regulator built, was negative. Measured in the river, where the geese actually arrived, it was 155 people on the wings of an Airbus.

The labs this week are the simulator. The operator next year is in the river.

🎯 The Operator Memo

A confession before the three actions, because the memo applies to the desk that writes it. Reading Lemkin’s posting this morning, I had the small, uncomfortable, faintly flattering realization that he was describing my role. I am the polymath in this equation. I read the cycle. I connect the threads. I ask the questions. The model verifies the connections and fills in the blanks. The CO/AI desk is the SaaStr org chart at a smaller scale, with the same wiring. The captain still calls the tack.

Three things to do, before the next news cycle gets here.

One. Audit your speed layer: every workflow in your business where the human is doing cruise-phase work and the rest of the stack is waiting. Get the human out of the critical path. That doesn’t mean fire them; it means re-route the work. Murati’s console, Tunguz’s inbox-as-API, and Google’s Magic Pointer are not products you “evaluate.” They are the new wiring. The operator who is still asking whether Claude Code can replace a junior engineer this quarter is the operator who, in 2009, was still asking whether GPS was reliable enough to fly an approach into LaGuardia. The wiring is the cruise phase. The wiring is not where you spend your week. Spend the week on the bird-strike phase.

Two. Map your polymath layer. Where in your business does a connection across two disparate fields produce 10x the value of either field in isolation? For Sully it was glider-instructor logic plus airport geography. For Cyan Banister’s mycologist it was fungal taxonomy plus bee behavior. For Lemkin’s new hire it is Salesforce attribution rules plus sponsor relationship management plus the daily content cadence. For Ascend’s Omar Ismail it was customer-language transcripts plus paid-Meta ICP modeling plus the HubSpot 22-branch attribution engine. Find the connections you make that the specialists in each field don’t make. Those are the workflows you put the agents on first. The agents will amplify your width. The agents will not invent it.

Three. Take the captain’s seat. Write your own brace-for-impact protocol. What are the three things, in your business, that nobody but you should sign off on? Not because the model isn’t capable. The model is capable. Because your name is on the cert. The hiring decision that puts the wrong person in a critical seat. The pricing decision that locks in a customer for the year. The product decision that determines which 3 of 50 ship. The sponsor email that lands at 12:23 a.m. The campaign that pulls 47 percent of the quarter’s revenue. Those are the captain’s seats, and for the next eighteen months they are the seats that pay. Lemkin wrote his out and put it in a job posting. Your version of that posting is the document that decides whether you spend Q3 in the captain’s seat or in the simulator.

The cycle is converging fast. Trump landed in Beijing this morning with Jensen Huang on Air Force One; chips are now a presidential-level negotiation, and Anthropic reportedly refused China access to its newest model the same week. Anduril doubled to $61 billion on a $5 billion raise before lunch, a raise that is now the largest institutional capital round in the defense AI sector’s history. Google’s Threat Intelligence Group confirmed Monday the first AI-developed zero-day caught in the wild, which the Groundhog Day piece on Sunday said was coming. Every part of the stack except the captain’s seat is being optimized this week. Which seat are you in?

🌊 The River

Sully Sullenberger landed at 3:30 PM Eastern, after thirty-five seconds of thinking that none of the simulators modeled, after three minutes and twenty-eight seconds in command of an airframe with no thrust, after a forty-year career that included more glider hours than most pilots ever fly. The ferry boats arrived in four minutes. The first one was the NY Waterway’s Thomas Jefferson. Brittany Catanzaro, twenty years old, was the captain. She had taken the wheel from her senior captain when the call came in. The senior had let her have it. She brought the boat to the wing of the floating Airbus and her crew pulled the passengers off in thirteen minutes.

Two captains, one river, twenty minutes from the geese. The slowest, most expensive, most legally accountable node in each of those two systems was the node that mattered. The cycle this week shipped the consoles and the cursors and the inbox replacements and the agent dashboards. The cycle this week did not ship a substitute for Sully’s thirty-five seconds. It did not ship a substitute for Catanzaro’s instinct to nose the ferry into the wing.

The thirty-five seconds are still in the seat. Sit in it.

Brace for impact.

— Harry DeMott, CO/AI
