Artemis II Just Launched. Your AI Can’t Get You There.

The hardest science on Earth still doesn't trust AI — and the gap between what we've built and what we've forgotten should keep every operator up at night.

THE NUMBER: 53 and $0

Fifty-three years since humans last traveled beyond low Earth orbit — Apollo 17, December 1972 — and zero dollars: what every AI agent replacing a human worker contributes to Social Security, unemployment insurance, and Medicare. One number measures how long we forgot. The other measures what we’re choosing not to fix.

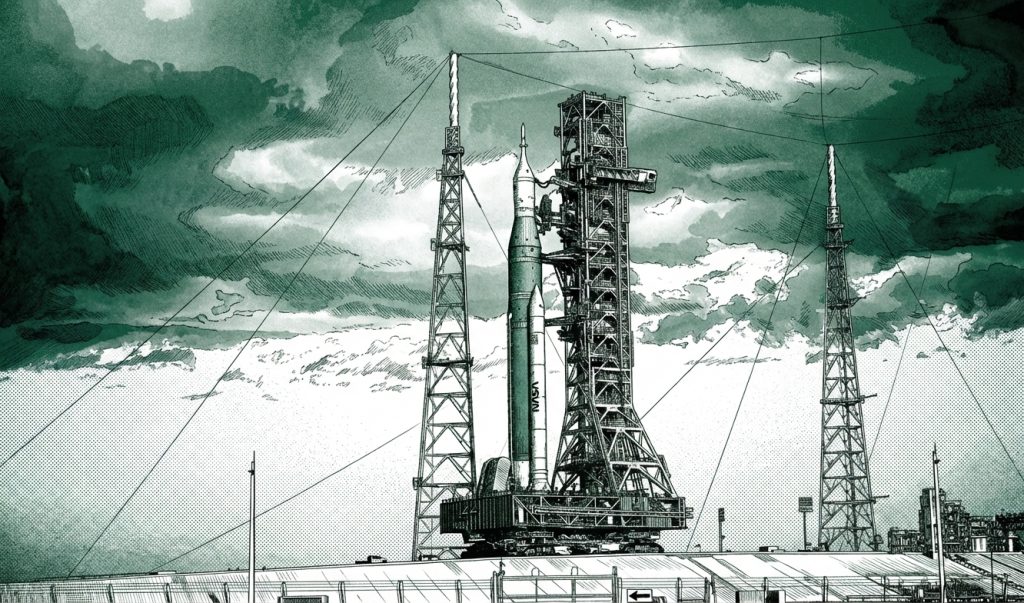

By the time you read this, four astronauts should be hurtling toward the moon.

Artemis II launched yesterday from Kennedy Space Center — the first crewed mission beyond low Earth orbit since Nixon was president. Reid Wiseman, Victor Glover, Christina Koch, and Jeremy Hansen are on a ten-day free-return trajectory that will carry them farther from Earth than any human has ever traveled. Glover is the first person of color to leave low Earth orbit. Koch is the first woman. Hansen is the first non-American. They’re not tourists. This is not Mrs. Bezos and her girlfriends up for a friendly ride. This is serious science — science so old we forgot what it took to get us there.

And while they’re out there, the rest of us will be sitting at our desks, arguing about whether Claude hallucinates less than GPT. Meanwhile, Citigroup eliminates 20,000 positions, JPMorgan builds its real headquarters in Texas, and Apollo Global shops for a second HQ in Austin, Nashville, or South Florida because, and this is a direct quote, “New York does not have a monopoly on talent.”

We are living through two simultaneous revolutions. One puts humans on the moon again. The other replaces them at their desks. And the thing connecting them is something nobody wants to talk about: we are forgetting how we built the things we depend on, at the exact moment we’re handing the keys to machines that never learned in the first place.

Look Up

I want to talk about awe for a minute. Because we’ve lost it.

We take it for granted that we can replace a knee. Cure diseases that killed millions a generation ago. Plumb the depths of the ocean. Park a telescope nearly a million miles from Earth and point it at the edge of the observable universe. My daughter’s boss designed the imaging system on the James Webb Space Telescope. Her design. Human ingenuity. The pictures it sends back make you feel like you’re looking at God’s rough drafts.

My daughter is a rocket scientist. I couldn’t be prouder of her. She was inspired by a trip to the Kennedy Space Center as a kid, pursued engineering, got a master’s, and now works on the thermal systems of solar satellites. To her and her colleagues, Artemis is a HUGE deal. Not a news cycle. Not a content opportunity. A huge deal.

She doesn’t use AI.

Not because she’s a Luddite. Because it’s not precise enough. The tolerances required for satellite thermal systems and manned spaceflight don’t accommodate hallucination. You can’t have wishful thinking in orbit. There’s no “close enough” when the margin for error is measured in microns and the cost of failure is human life. You need redundancies — and then more redundancies. The kind of redundancies that only come from knowledge that was earned, not downloaded.

When Elon Musk was blowing up rocket after rocket, on drone ships at sea and on the launch pad in Boca Chica, a lot of people laughed. Schadenfreude, mostly. But Musk was earning the knowledge, the hard, expensive, brick-by-brick kind, that let SpaceX fly more than half of all orbital launches worldwide last year. That earned knowledge is what let him catch a returning booster in a pair of mechanical arms. I don’t care how many times you’ve seen the video. Watching that will never get old. It is science and engineering at its most magnificent.

So when we send four people around the moon on a vehicle that will break the distance record set by Apollo 13’s emergency trajectory, we should stop for a second and feel something. Not analyze it. Not monetize it. Feel it.

The Amnesia

Here’s what worries me.

The Apollo program employed 400,000 people at its peak. The institutional knowledge those engineers carried — the hard-won, failure-tested, built-from-slide-rules understanding of how to get humans to the moon and back — did not transfer cleanly to the Artemis generation. As one analysis put it: the Artemis architects don’t know basic mission parameters that were second nature to their Apollo predecessors. We built it with slide rules. Then we forgot how.

This is not a space problem. This is a civilization problem. And AI is accelerating it.

Nobody earned the knowledge they access through a Claude session or a Google search. They didn’t build on it brick by brick, error by error, until one day it snapped into place. The totality of human knowledge is available 24/7/365 from a device in your pocket, and nobody stops to think about how astonishing that is. Billions of phones on this planet. Every one of them connected to an intelligence layer that no single human built or fully understands.

Look at handwriting. Nobody can write anymore. Nobody has to. We stopped writing checks, stopped writing letters, and soon we won’t even type — we’ll just speak. And when Musk perfects Neuralink, we won’t need to speak either. We’ll communicate at higher bandwidth, direct brain-to-machine. We obviously gain enormously from each of these leaps. But what do we lose?

Spoken poetry? The feel of a pen on paper? Music made by hands, not prompts? These aren’t sentimental questions. They’re structural ones. What is uniquely human and worth keeping? And who decides?

Andrej Karpathy, one of the most respected AI researchers alive, recently admitted he’s “starting to atrophy [his] ability to write code manually.” The people building the tools are losing the underlying skills. When the toolmakers forget how to work without their tools, you have a dependency with no fallback. In orbit, they call that a single point of failure. On Earth, we’ve come to call it progress.

The Reckoning

Now look down from the moon. Look at what’s happening on the ground.

Citigroup is eliminating 20,000 positions as part of a restructuring that CEO Jane Fraser described with the warmth of a performance review: “We are not graded on effort.” JPMorgan Chase now has 31,000 employees in Texas versus 24,000 in New York. Apollo Global Management announced last week that it’s building a second headquarters in Austin, Nashville, or South Florida. Their statement: “New York does not have a monopoly on talent, and we expect most of our future growth will take place in our second HQ.”

This is a trend that is not going to stop. High-paying knowledge work is leaving the cities that built it. And into the vacuum steps a workforce of AI agents that never commute, never negotiate, never organize, and never — this is the part that should alarm you — pay a dime into the systems designed to catch the people they replace.

Social Security is funded by payroll taxes. So is unemployment insurance. So is Medicare’s hospital insurance. Every human worker who gets replaced by an agent that processes invoices, writes code, drafts contracts, or routes information is a worker whose payroll contribution disappears. The tax base erodes as the workforce shrinks. The obligations don’t. The states carry their own version of the same problem: legacy obligations like pensions, Medicaid, infrastructure debt, and public employee contracts are exactly what make New York, Illinois, California, and New Jersey some of the most expensive places in America to do business. It’s why those states never tire of taxing their residents, right up until employers like Citigroup, JPMorgan, and Apollo say no more and head for Nashville, Dallas, and Miami, where the obligations are lighter and the math works.

Nobody in Washington is solving this. Nobody at the state level is solving it either. And honestly — I’m not sure I want any of them to. Government has a remarkable track record of making things uniformly worse when it intervenes in technology transitions it doesn’t understand.

Here’s what I’d do instead. Tell the AI companies — the ones posting $2 billion in monthly revenue and raising $122 billion in a single round — that they’re going to contribute more in taxes, earmarked for one thing: universal AI education. Give every person in the United States a free account. One account, any model. And pair it with AI training for everyone — from the very youngest to the most elderly, and everyone in between.

There’s always an argument, fomented by one side or another, that the playing field is tilted. Well, AI is an unbelievable leveler. A kid in rural Mississippi with a smartphone and a free Claude account has access to the same intelligence layer as a partner at Goldman Sachs. Anyone can do anything now. We should be encouraging that — loudly, aggressively, with the same national purpose that put men on the moon in the first place.

Give people access to the trajectories of the Apollo program and let AI walk them through the science, step by step — the orbital mechanics, the materials engineering, the life support calculations that kept three men alive in a tin can 240,000 miles from home. Let a fourteen-year-old in Topeka sit with Claude and understand, really understand, why the heat shield had to be exactly that thickness and not a micron less. The vast majority won’t care. But the few who do — who feel that spark the way my daughter felt it at Kennedy Space Center — those are the next generation’s innovators. And right now, we’re not even giving them the chance.

Use this moment. Use the awe. Get people enthused instead of terrified.

The Interface

There’s one more thread I want to pull.

I remember watching the SpaceX Dragon capsule dock with the International Space Station a few years ago and being struck by the interior of the crew cabin. It looked like a Tesla. Touchscreens. Clean lines. Minimal controls. Compare that to the Apollo capsule — a thousand switches, dials, gauges, and circuit breakers covering every surface. Every parameter visible because every parameter might kill you.

The Apollo interface was designed for a world where the human needed to see everything. The Dragon interface was designed for a world where the system decides what you need to see. Same mission. Radically different answer to the question: what does the person in the seat actually need right now?

That’s the question every software company, every enterprise platform, every AI product is about to confront. Steve Jobs took the fixed buttons off the phone and gave us dynamic interfaces per app. The next move — and I believe this is coming fast — is taking the fixed interface off the app entirely. Custom surfaces, built on the fly, for whatever decision you’re making right now.

If I’m planning a family vacation and my choices are Jackson Hole, Cabo San Lucas, or Sedona — a real test case in our house — the ideal interface gives me cost, flight times from two cities, hotels, activities, weather probabilities, and a few visuals. That’s it. Salient information, decision criteria, potential outcomes, relative weightings. Make the decision, move on.

If you’re flying a spacecraft, the information that matters changes every second. You want the highest-value signal in front of you, and only that signal. That’s what a smart router does — prioritizes packets. That’s what Jack Dorsey and Roelof Botha argued for in their manifesto on replacing corporate hierarchy with AI. That’s what the Dragon capsule figured out. The interface should serve the decision, not the system.
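The “highest-value signal, and only that signal” idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how Dragon’s flight software or any real router works: it assumes each piece of information is a hypothetical `Signal` carrying made-up urgency and relevance scores between 0 and 1, blends them with an assumed weighting, and surfaces only the top `k`.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """A hypothetical piece of information competing for screen space."""
    name: str
    urgency: float    # assumed 0..1 scale: how soon this could matter
    relevance: float  # assumed 0..1 scale: how much it bears on the current decision


def top_signals(signals: list[Signal], k: int, urgency_weight: float = 0.6) -> list[Signal]:
    """Return the k highest-value signals, highest first.

    Value is a weighted blend of urgency and relevance; the 0.6 default weight
    is an arbitrary assumption for illustration.
    """
    def score(s: Signal) -> float:
        return urgency_weight * s.urgency + (1 - urgency_weight) * s.relevance

    # nlargest keeps only the top k, so everything below the cutoff is never rendered.
    return heapq.nlargest(k, signals, key=score)


if __name__ == "__main__":
    # Placeholder readings; the scores are invented, not real telemetry.
    cabin = [
        Signal("cabin pressure", urgency=0.9, relevance=0.9),
        Signal("fuel margin", urgency=0.5, relevance=0.8),
        Signal("cabin temperature", urgency=0.2, relevance=0.3),
        Signal("comm link status", urgency=0.4, relevance=0.6),
    ]
    for s in top_signals(cabin, k=2):
        print(s.name)
```

With these placeholder scores it prints cabin pressure, then fuel margin. The design choice worth noticing is the hard cutoff: the function returns only `k` items, so deciding what not to show is built into the interface rather than left to the user.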

We’re going to stop drowning in walls of text and option paralysis. The technology that’s smart enough to replace a knowledge worker is smart enough to show you only what you need to see. The companies that figure this out — the ones that build the Dragon capsule, not the Apollo dashboard — will own the next decade.

What This Means For You

Artemis II is in the air. And on the ground, the world is rearranging itself around whoever understands what this moment actually is.

Audit your dependencies. If your business runs on knowledge that lives in people’s heads rather than in systems, you’re one resignation away from your own Apollo-to-Artemis gap. The companies that survive technology transitions are the ones that encode institutional knowledge before they lose it.

Think about the tax math. Every agent you deploy saves labor cost. It also removes a payroll tax contribution from a system your employees will eventually need. This isn’t a moral argument. It’s an actuarial one. The policy is coming, whether from Washington or from the states. Get ahead of it.

Redesign the surface, not just the engine. The AI capability is already ahead of what most interfaces can deliver. If your users feel overwhelmed or underwhelmed, the model isn’t the problem. The interface is. Build for the decision, not the dashboard.

The moon is up there. Look at it tonight. Four people are on their way.

Three Questions We Think You Should Be Asking Yourself

What institutional knowledge in your organization exists only in people’s heads, and what happens when those people leave? The Apollo-to-Artemis knowledge gap took 53 years to reveal itself. In a company with an 18-month average tenure and AI tools that make junior employees feel more capable than they are, your gap could surface in quarters, not decades.

If every agent you deploy paid a 15% payroll tax, would the ROI still work? Run the math now, before policy forces the question. If the economics only work because agents are tax-exempt labor, your cost advantage is borrowed, not earned. Citigroup, JPMorgan, and Apollo are already making geographic bets on where the post-AI workforce lives. What’s yours?
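The question above is plain arithmetic, so here is a back-of-envelope sketch in Python. Everything in it is an assumption: `agent_roi` is a hypothetical helper, the 15% levy is the hypothetical rate from the question (for reference, today’s combined U.S. FICA rate on wages is 15.3%, split between employer and employee), and the salary and agent-cost figures are made-up placeholders. ROI here means net annual savings divided by annual agent cost.

```python
"""Back-of-envelope ROI for replacing a role with an AI agent, with and without
a hypothetical payroll-style levy on the wages the agent displaces.

All figures are made-up placeholders; plug in your own.
"""


def agent_roi(displaced_wages: float, agent_annual_cost: float, levy_rate: float = 0.0) -> float:
    """Return net annual savings divided by annual agent cost.

    displaced_wages: annual wages no longer paid to the replaced human role.
    agent_annual_cost: annual spend on the agent (model usage, infrastructure, human review).
    levy_rate: hypothetical tax on displaced wages; 0.0 models today's untaxed agent.

    Deliberately simple: ignores benefits and the employer's existing
    payroll-tax share on the human's wages.
    """
    if agent_annual_cost <= 0:
        raise ValueError("agent_annual_cost must be positive")
    levy = levy_rate * displaced_wages
    net_savings = displaced_wages - agent_annual_cost - levy
    return net_savings / agent_annual_cost


if __name__ == "__main__":
    # Hypothetical scenarios: (displaced wages, annual agent cost).
    scenarios = {
        "clear win": (80_000, 20_000),
        "thin margin": (50_000, 45_000),
    }
    for name, (wages, cost) in scenarios.items():
        untaxed = agent_roi(wages, cost)
        taxed = agent_roi(wages, cost, levy_rate=0.15)
        print(f"{name}: ROI {untaxed:.0%} untaxed, {taxed:.0%} with a 15% levy")
```

With those placeholder inputs, the “clear win” case drops from 300% to 240% but stays comfortably positive, while the “thin margin” case goes from about 11% to about -6%. That second case is the one the question is about: a cost advantage that exists only because the agent is untaxed.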

Are you building Apollo dashboards or Dragon cockpits? Your product shows users everything because your engineers built it that way. But the Dragon capsule proved that showing less — showing only what matters right now — is the harder design problem and the better answer. Is your interface serving the user’s decision, or your system’s architecture?

“The chatbots empowered me as an individual to act with the power of a research institute.”

— Paul, who used ChatGPT to create an mRNA vaccine protocol that saved his dog Rosie, as shared by Sam Altman

Technology is awe-inspiring. It can also be terrifying. Both of those things are true at the same time. The downstream effects of technology are life-changing, and the pace of that change is accelerating. But every once in a while, ideally in the next ten days, we should look up from our computers, look up at the moon, and remember that the same species arguing about prompt engineering just sent four of its bravest members on what should become the farthest crewed voyage in human history. A prelude to a base on the moon. A prelude to Mars.

Don’t forget to look up.

— Harry and Anthony

Sources

- NASA Artemis II Mission Page

- NASA Artemis II Launch Day Updates

- Apollo 17 — NASA

- Apollo Global Management Second HQ — Bloomberg

- JPMorgan Chase Makes Dallas HQ Relocation Official

- Citigroup CEO Jane Fraser on Job Cuts — Fortune

- Citigroup WARN Notice — Crain’s New York Business

- We Built It With Slide Rules. Then We Forgot How. — Daily Feed

- Sam Altman on X — Paul’s mRNA Vaccine Story

- Andrej Karpathy on Code Atrophy

- Jack Dorsey & Roelof Botha — From Hierarchy to Intelligence — Sequoia Capital

- Stripe’s AI Minions Ship 1,300 PRs Weekly
